Navigating the Complexity: Introduction to Explainable Machine Learning

In the intricate landscape of machine learning, Explainable Machine Learning, often discussed under the broader label of Explainable AI (XAI), emerges as a crucial concept. This article looks at why explainability matters in machine learning models, exploring its applications, its challenges, and the impact it can have on industries and decision-making processes.

The Essence of Explainability: Shedding Light on Black Box Models

Explainable Machine Learning aims to demystify the often opaque nature of complex machine learning models. Highly parameterized models, particularly deep neural networks, are often referred to as “black boxes” because their internal reasoning is difficult for humans to inspect, even when their predictions are accurate. XAI strives to open up these black boxes, making machine learning models more interpretable and accountable.

Applications Across Industries: From Healthcare to Finance

Explainability in machine learning finds applications across diverse industries. In healthcare, interpretable models are crucial for providing transparent insights into diagnostic decisions. In finance, understanding the rationale behind algorithmic predictions is essential for regulatory compliance and risk management. The applications extend to fields like criminal justice, where explainability is vital for ethical decision-making.

Enhancing Trust: The Connection Between Explainability and Trustworthiness

Trust is a foundational element in adopting machine learning solutions. Explainable Machine Learning plays a pivotal role in building and enhancing trust. When stakeholders, whether they be clinicians, financial analysts, or end-users, can comprehend how a model arrives at a decision, trust in the technology grows. This trust is essential for widespread adoption and acceptance.

Human-AI Collaboration: Fostering Collaboration and Understanding

Explainability fosters collaboration between humans and AI systems. When users can understand the reasoning behind AI-generated insights, they become more willing to collaborate with the technology. This collaboration is especially crucial in settings where decisions impact individuals’ lives, and a clear understanding of the decision-making process is essential.

Challenges in Achieving Explainability: Striking the Right Balance

While the benefits of explainability are evident, achieving it is not straightforward. The central difficulty is balancing simplicity against accuracy. A model simplified too far may lose predictive accuracy, while an explanation that is too detailed may be hard for users to comprehend. The goal is a sweet spot where the model stays accurate enough for the task and its explanations stay simple enough to be understood.
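The trade-off between simplicity and accuracy can be made concrete with a toy sketch. The example below is an illustration only, not a standard XAI method: it assumes a hypothetical piecewise-constant model in which each bin acts as one human-readable rule ("if x falls in this range, predict this value"), and it measures how the fit to a simple curve improves as the number of rules grows. The function names and the target curve `y = x ** 2` are made up for this example.

```python
def fit_binned_model(xs, ys, n_bins):
    """Fit a piecewise-constant model on [0, 1]: one mean prediction ("rule") per bin."""
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for x, y in zip(xs, ys):
        b = min(int(x * n_bins), n_bins - 1)  # x == 1.0 falls into the last bin
        sums[b] += y
        counts[b] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]


def predict(model, x):
    """Look up the rule (bin mean) that applies to x."""
    n_bins = len(model)
    return model[min(int(x * n_bins), n_bins - 1)]


def mean_squared_error(model, xs, ys):
    """Average squared gap between the model's predictions and the targets."""
    return sum((predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Toy data (an assumption for illustration): a smooth curve sampled on a grid.
xs = [i / 999 for i in range(1000)]
ys = [x ** 2 for x in xs]

# More rules fit the curve more closely, but there are more rules to read.
errors = {k: mean_squared_error(fit_binned_model(xs, ys, k), xs, ys) for k in (2, 8, 32)}
for k, err in errors.items():
    print(f"{k:>2} rules -> MSE {err:.6f}")
```

The error here is measured on the same points used for fitting, so it only shows how closely each model can follow the curve. A two-rule model is easy to read but coarse, and a 32-rule model follows the curve more closely at the cost of a longer rule list.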

Interpretable Models vs. Model-agnostic Techniques: The Diverse Approaches to XAI

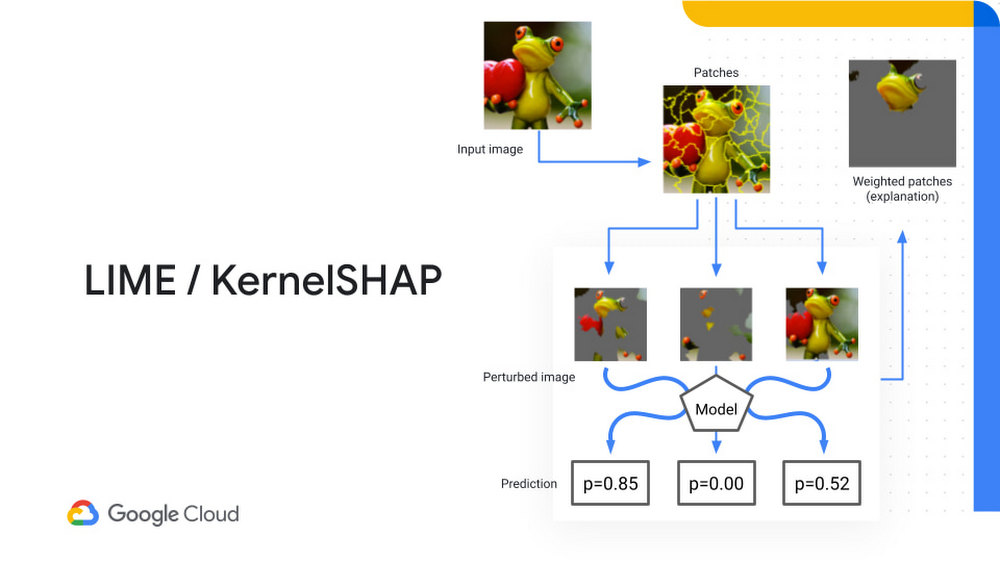

Explainable Machine Learning employs various approaches, from interpretable models to model-agnostic techniques. Interpretable models, such as shallow decision trees or linear models, are explainable by construction: the splits or coefficients themselves show how each input influences the output. Model-agnostic techniques, like LIME (Local Interpretable Model-agnostic Explanations), instead aim to explain the predictions of any black-box model by fitting a simple, interpretable surrogate model to its behavior in the neighborhood of a single prediction.
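The local-approximation idea behind techniques like LIME can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the API of the `lime` library: `black_box` is a made-up stand-in for an opaque model, and the Gaussian perturbations, the exponential proximity kernel, and all parameter defaults are choices made for this example. The sketch perturbs one input, queries the black box, and fits a proximity-weighted linear model whose slopes serve as the local explanation.

```python
import math
import random


def black_box(x):
    """Hypothetical opaque model: nonlinear in x[0], linear in x[1]."""
    return x[0] ** 2 + 3.0 * x[1]


def solve_linear_system(a, b):
    """Solve a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * w[c] for c in range(r + 1, n))
        w[r] = (m[r][n] - tail) / m[r][r]
    return w


def local_surrogate(predict, point, n_samples=500, scale=0.1, kernel_width=0.25, seed=0):
    """Fit a weighted linear model to `predict` around `point`.

    Returns (intercept, slopes). Each slope estimates how the prediction
    changes locally when the corresponding feature changes.
    """
    rng = random.Random(seed)
    d = len(point)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]  # accumulates X^T W X
    xtwy = [0.0] * (d + 1)                          # accumulates X^T W y
    for _ in range(n_samples):
        sample = [p + rng.gauss(0.0, scale) for p in point]
        dist2 = sum((s - p) ** 2 for s, p in zip(sample, point))
        weight = math.exp(-dist2 / kernel_width ** 2)  # nearer samples count more
        row = [1.0] + [s - p for s, p in zip(sample, point)]  # features centered on point
        y = predict(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    coef = solve_linear_system(xtwx, xtwy)
    return coef[0], coef[1:]


intercept, slopes = local_surrogate(black_box, [1.0, 2.0])
print("local intercept:", round(intercept, 3))
print("local slopes:", [round(s, 3) for s in slopes])
```

For this stand-in model the true local sensitivities at the point (1, 2) are 2 for the first feature and 3 for the second, so the fitted slopes should land close to those values. The explanation is only valid near the chosen point: at a different input, the first slope would change because the model is nonlinear in that feature.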

Regulatory Landscape: The Growing Importance of Explainability in Regulations

The regulatory landscape is evolving to recognize the importance of explainability. In sectors like finance and healthcare, regulatory bodies are increasingly issuing guidance that calls for transparency in automated decisions and, in some cases, for explanations that affected individuals can understand. This reflects a growing acknowledgment of the need for transparency and accountability in algorithmic decision-making.

Explainability in the Era of Big Data: Navigating Complex Data Landscapes

In the era of Big Data, where machine learning models operate on vast datasets, achieving explainability becomes even more critical. Complex data landscapes pose challenges, but they also underscore the importance of providing clear explanations. Understanding how algorithms interpret and use large volumes of data is crucial for maintaining ethical standards and ensuring responsible AI deployment.

Explore the Advancements: Explainable Machine Learning at www.misuperweb.net

For a deeper exploration of the advancements in Explainable Machine Learning, visit www.misuperweb.net. The website offers insights, resources, and updates on the latest developments in explainability techniques. Explore how XAI is reshaping the landscape of machine learning and fostering a more transparent and accountable AI future.

Conclusion: Illuminating the Future of Machine Learning

Explainable Machine Learning points the way forward for the field. By providing insights into decision-making processes, enhancing trust, and fostering collaboration, XAI contributes to a future where machine learning is not only powerful but also interpretable and accountable. As technology continues to advance, the pursuit of explainability stands as a cornerstone for ethical and responsible AI deployment.